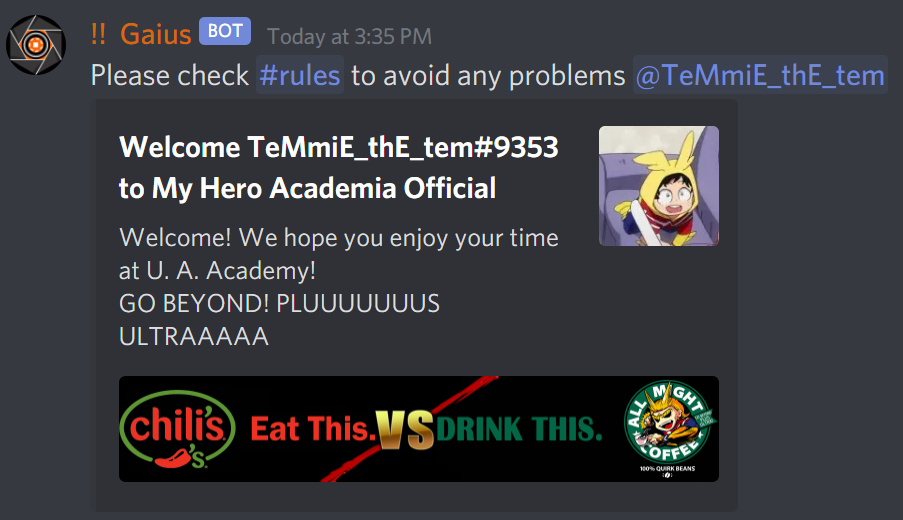

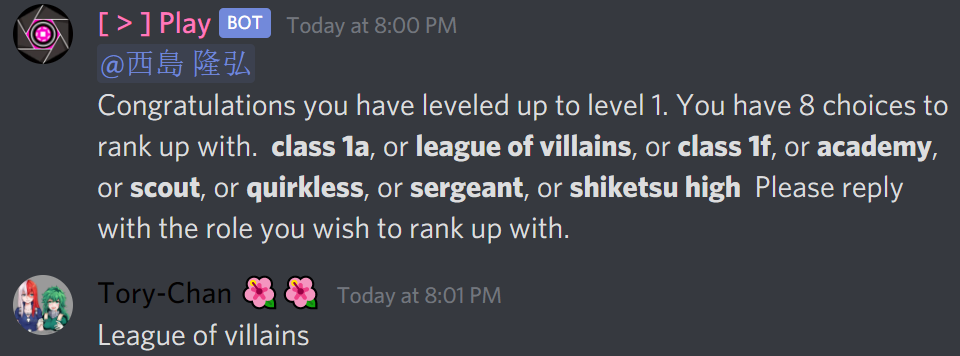

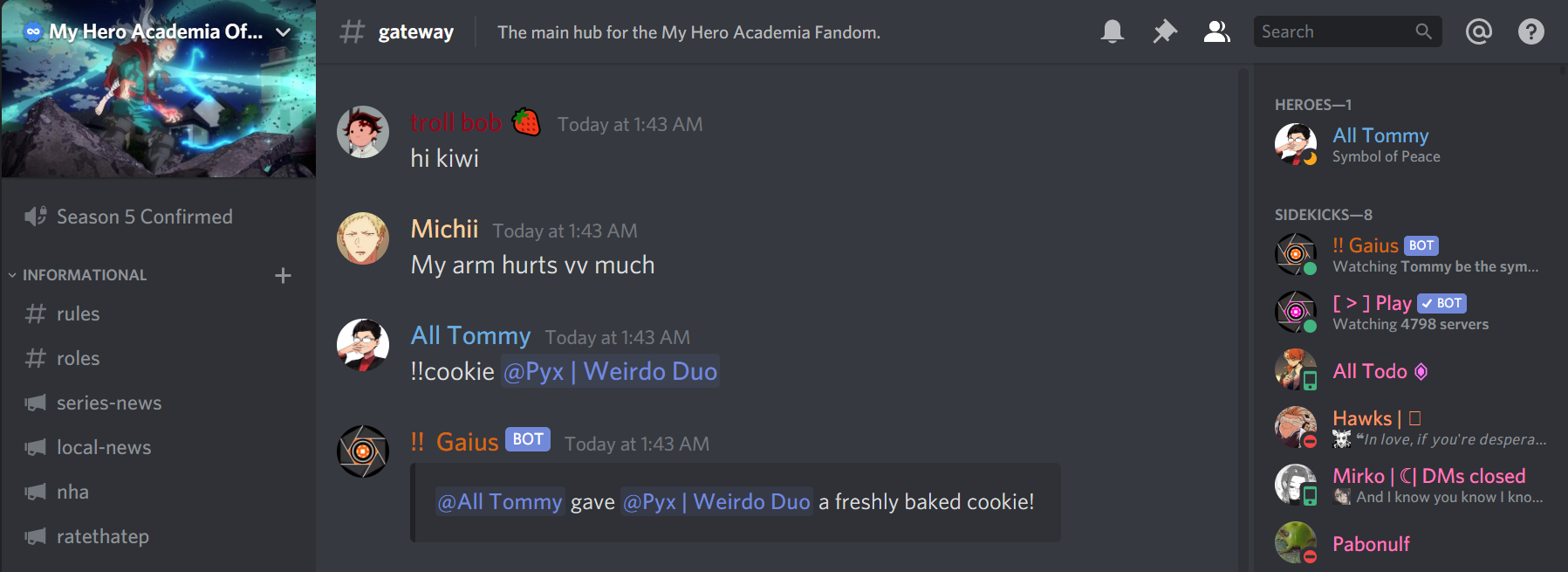

My Hero Academia

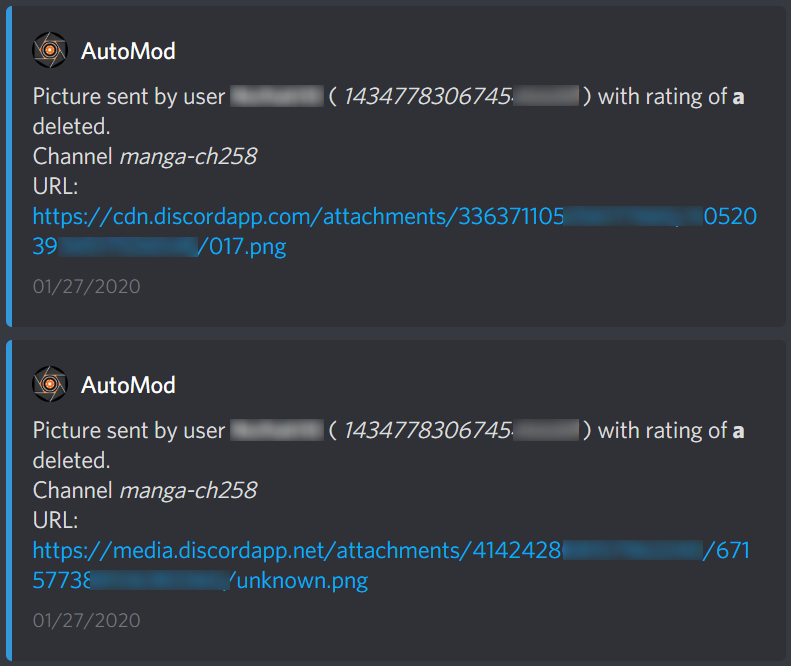

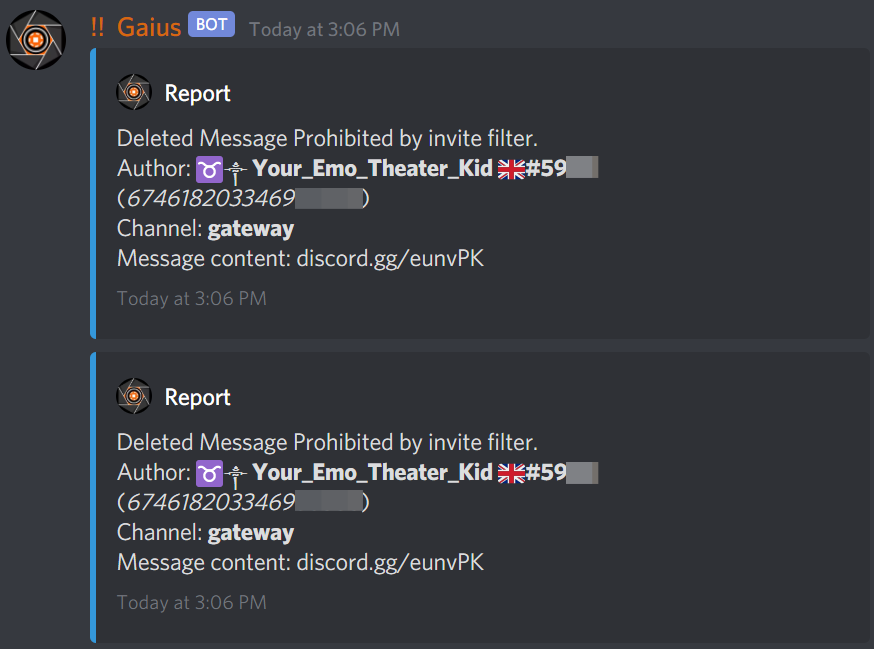

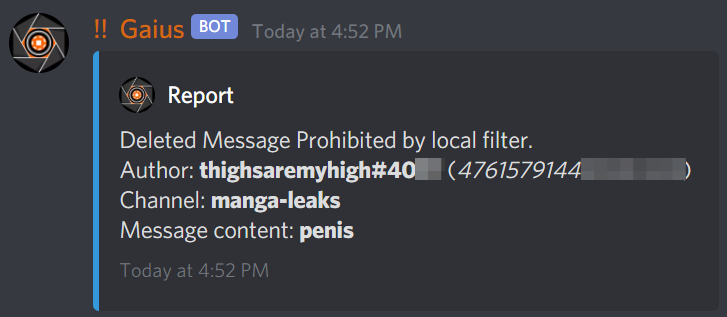

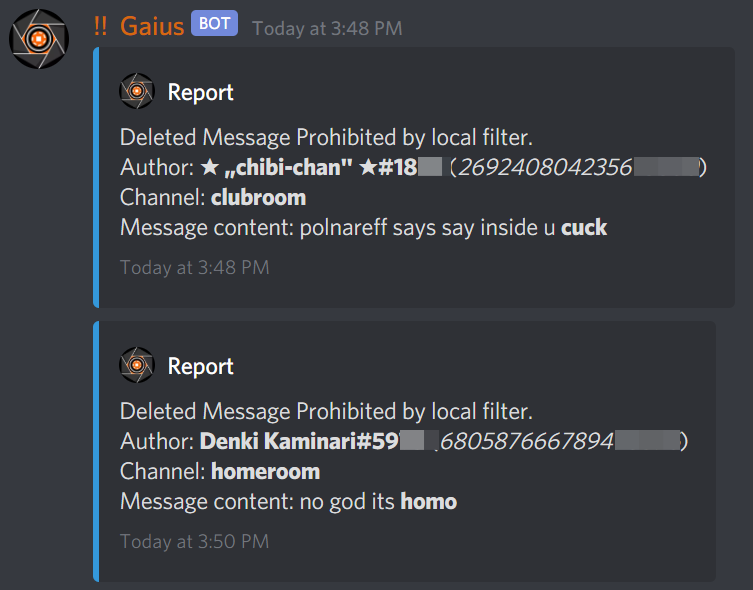

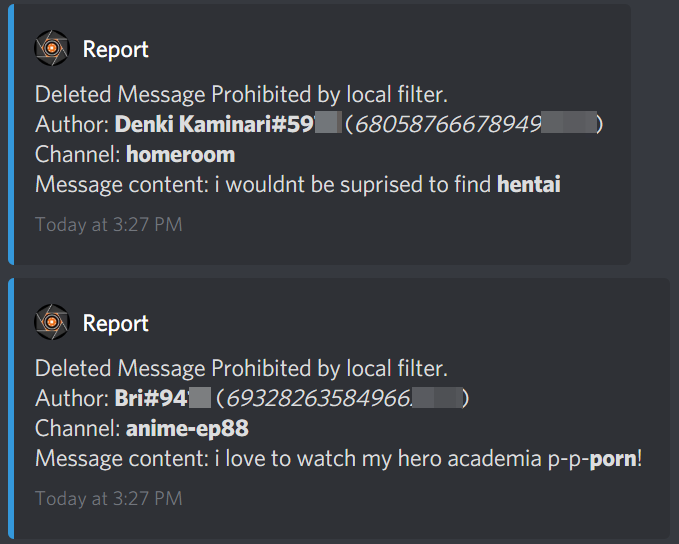

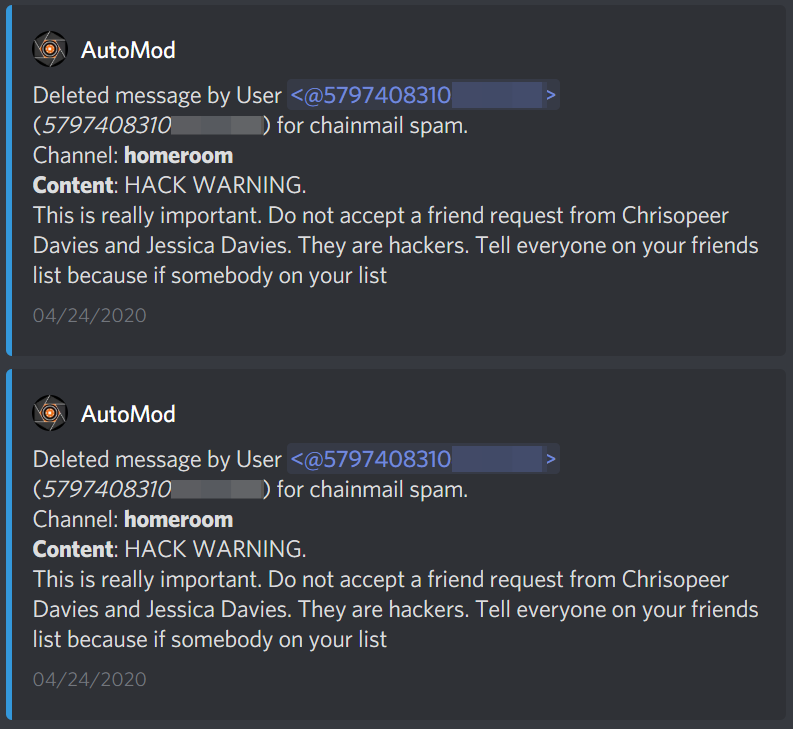

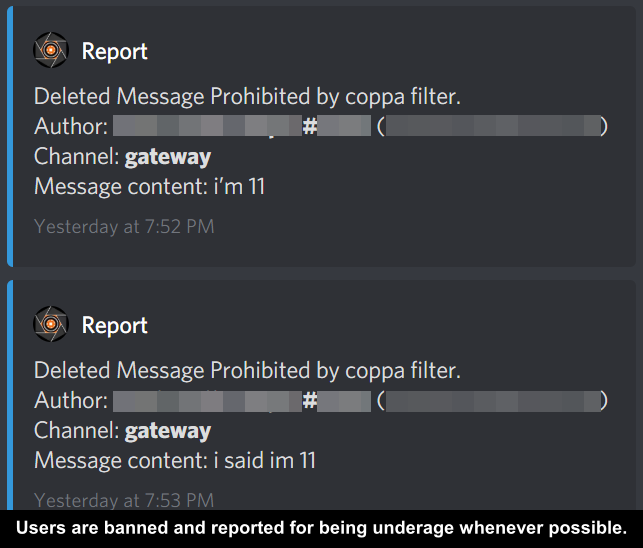

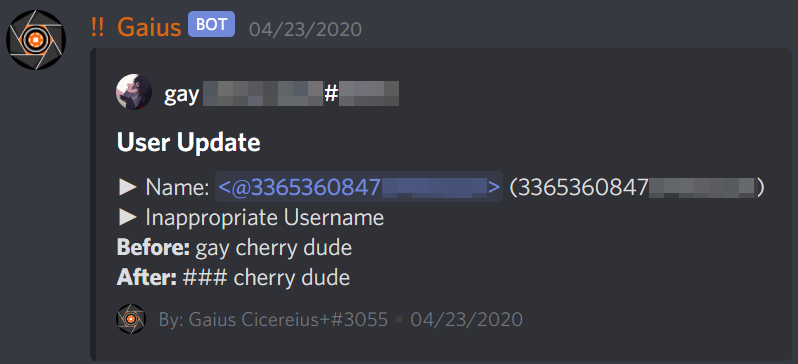

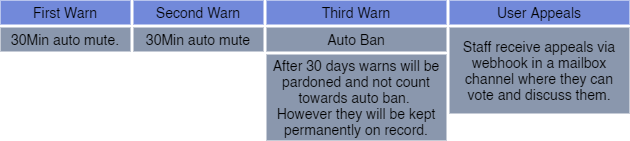

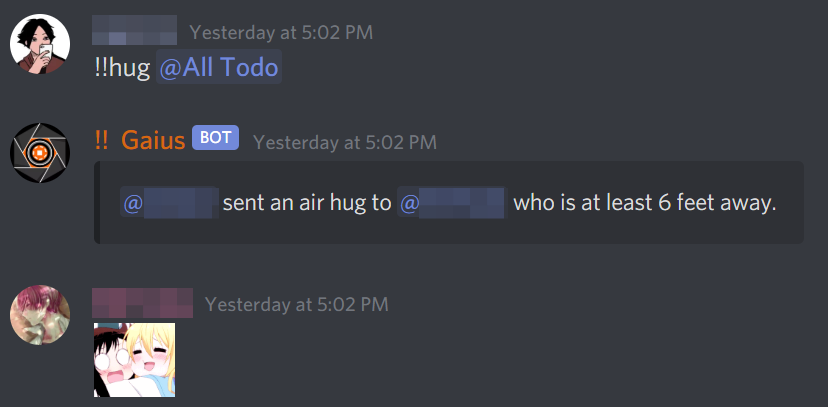

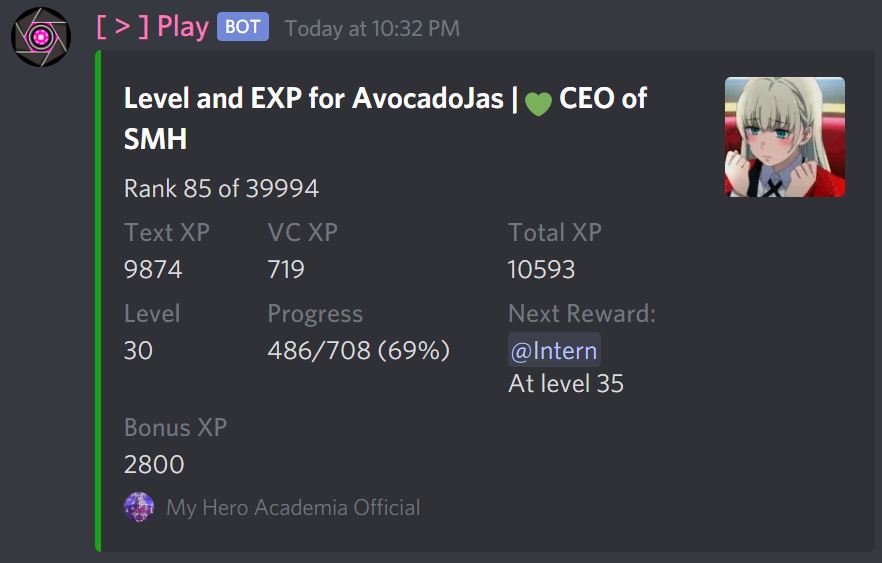

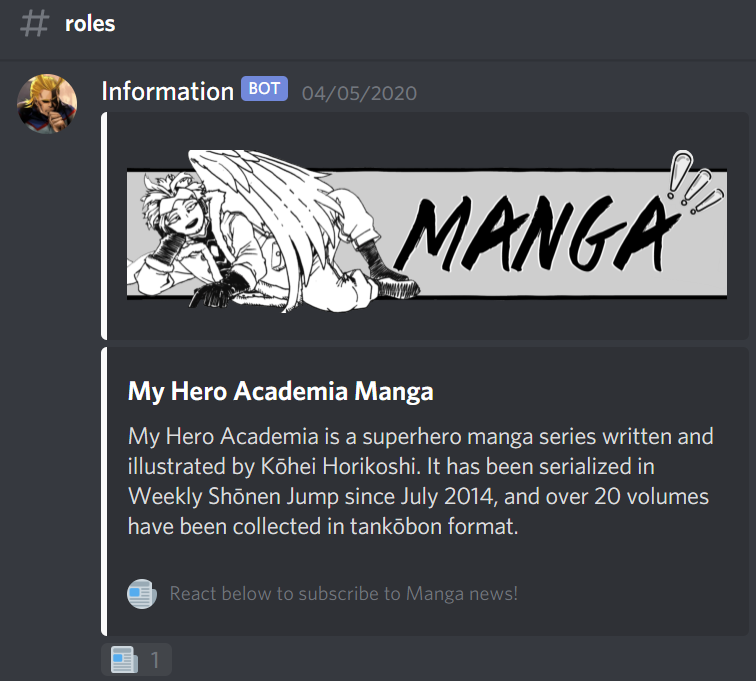

We’re the server for My Hero Academia, a superhero manga series written and illustrated by Kōhei Horikoshi. Our active, welcoming community offers a friendly environment free from toxic and NSFW content. Discuss the series and meet fellow fans from all over the world!